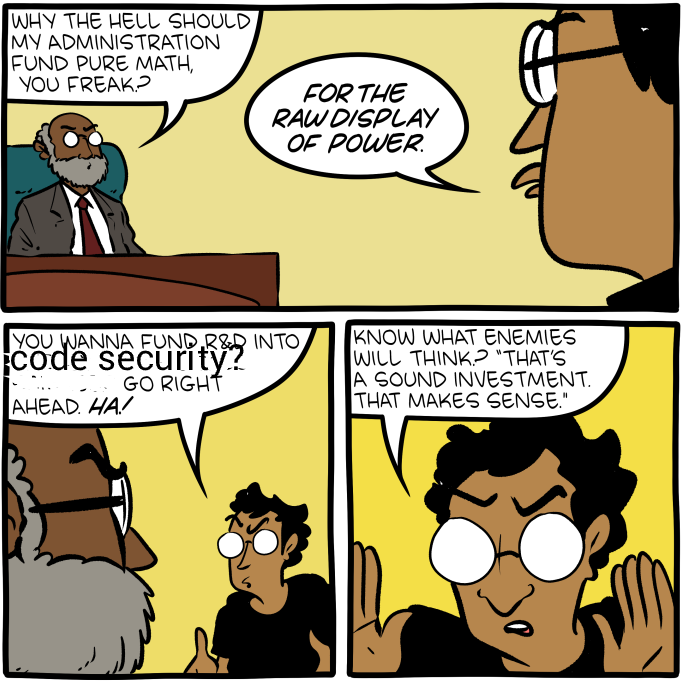

We're a small contractor bridging the cultures between AI and formal methods, motivated by threat models in which AI capabilities don't stop going up.

Step one is evals and RL environments in proof engineering, for defensive acceleration reasons.

We should use formal methods to buy last minute slices of swiss cheese, especially in critical infrastructure.

What we do (public facing)

Papers

- (Forthcoming) FVSpec

- A benchmark for vericoding: formally verified program synthesis

- Proving the Coding Interview: A Benchmark for Formally Verified Code Generation

Comms/movement stuff

- Can we secure AI with formal methods?

- FMxAI 2025

- Cookbook

- Cruxes for AI Control via Proof Carrying Code

Organizations

- Beneficial AI Foundation (BAIF)

- Galois - Contracting on ARIA's Mathematics for Safe AI program

Let us know how we can help

quinn@for-all.dev